Data Preparation and Background

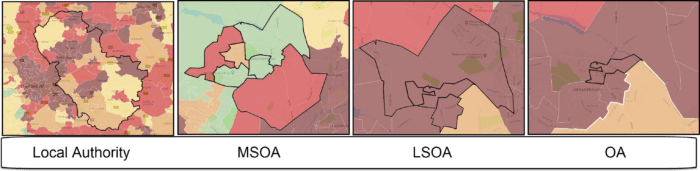

For the final 5 weeks of this module data from the 2021 Census is being used. Each file contains data reported at the ‘Output Area’ level. In the census there are a range of different ‘geographies’ that are used in the release of data.

Output Areas (OAs): These are the smallest of the geographies that data is published – there’s lots of census data at this level but actually they are very small (on average 250 households) so often data from non-census sources is released as larger units like LSOA or MSOA (see below).

Lower Layer Super Output Areas (LSOAs): LSOAs have an average population of 1500 people or 650 households.

Middle Layer Super Output Areas (MSOAs): MSOAs have an average population of 7500 residents or 4000 households.

This is roughly how they might look on a map.

So to create an LSOA, you group together OAs and to create an MSOA you group together LSOAs. This means they ‘nest’.

When you obtain a data file from the likes of the census you will have one row of data per geographic unit you are using. So for example a census file at the OA level will have the data pertaining to each OA as individual rows.

In the case of the data we are looking at each row in our table contains data on how many people have the attribute we are interested in, or what percentage of the population of that area share that attribute.

We can read CSVs into R using the read.csv() function. This requires us to identify the file location within our workspace, and also assign an object name for our data in R. For this we will be looking in the ‘All_2021_Census’ folder.

If you open that folder you should see the ‘Table_Metadata.csv’ file, which contains the full list of the table names and what they contain. You can see there’s a lot and that the codes are not terribly helpful without this lookup table! If you open one of the csvs with a file name beginning ‘ts’ you will see a column names OA, which is a good indication that ‘Output Areas’ are the unit of geography used in this file. These clues are helpful when it comes to looking at the spatial data files we need. For what it’s worth ‘ts’ is short for ‘Topic Summary’ which is the category that these census files have been given by the Office for National Statistics.

All the 2021 Census data can be found here (in case you’re interested), but as you will see a large subset of it is provided in the folder created for this class. The data cover the area of England and Wales (Scotland and Northern Ireland operate slightly different systems). For the module we will pick out a few tables and focus on smaller geographic areas. But don’t forget about the wealth of data in the folder as you may wish to use it in the future.

# read.csv() loads a csv, remember to correctly input the file location within your working directory

ethnicity_input <- read.csv("All_2021_Census/ts021.csv")

rooms_input <- read.csv("All_2021_Census/ts053.csv")

qualifications_input <-read.csv("All_2021_Census/ts067.csv")

employment_input <-read.csv("All_2021_Census/ts066.csv")Viewing data

With the data now loaded into RStudio, they can be observed in the objects window. These are large files because the cover all of England and Wales. In your ‘Environment’ tab you should see ‘188880 obs’ and then the differing numbers of columns (variables) within each table. Alternatively, you can open them with the View function as demonstrated below.

# to view the top cases of a data frame

View(employment_input)There are two problems with the data. Firstly, the column headers are still codes and are therefore uninformative. Secondly, the data is split between three different data objects so we’d like to combine into a single object.

Observing column names

To observe the column names for each dataset we can use a simple names() function. It is also possible to work out their order in the columns from observing the results of this function.

# view column names of a dataframe

names(employment_input)Selecting columns

The next step is to extract the columns we need. Revisit the ‘Table_Metadata’ csv for full text descriptions of each of the columns within the objects we just loaded in. We are going to grab the following:

| Object Name | Column Code | Text Description |

| ethnicity | OA | Output Area |

| ethnicity | ts0210001 | Total: All usual residents |

| ethnicity | ts0210018 | White: English, Welsh, Scottish, Northern Irish or British |

| rooms | OA | Output Area |

| rooms | ts0530001 | Occupancy rating for rooms: Total: All households |

| rooms | ts0530002 | Occupancy rating for rooms: Occupancy rating of rooms: +2 or more |

| qualifications | OA | Output Area |

| qualifications | ts0670001 | Highest level of qualification: Total: All usual residents aged 16 years and over |

| qualifications | ts0670007 | Highest level of qualification: Level 4 qualifications and above |

| employment | OA | Output Area |

| employment | ts0660001 | Economic activity status: Total: All usual residents aged 16 years and over |

| employment | ts0660013 | Economic activity status: Economically active (excluding full-time students): Unemployed |

In the process we are also going to convert the raw numbers we have into percentages. This is what the columns that correspond to the totals are needed for. We can revisit these data files if the raw counts are required, but for the practicals going forward percentages are all that are needed (don’t forget this when interpreting the data!). Why might percentages be better than the raw counts?

# Don't forget the reason we are dividing the counts by the totals then multiplying by 100 is to get the percentage of the population in each category.

ethnicity <- data.frame(OA=ethnicity_input$OA, White_British=(ethnicity_input$ts0210018/ethnicity_input$ts0210001)*100)

rooms <- data.frame(OA=rooms_input$OA, Low_Occupancy=(rooms_input$ts0530002/rooms_input$ts0530001)*100)

qualifications <- data.frame(OA=qualifications_input$OA, Qualification=(qualifications_input$ts0670007/qualifications_input$ts0670001)*100)

employment <- data.frame(OA=employment_input$OA, Unemployed=(employment_input$ts0660013/employment_input$ts0660001)*100)Here are more details about what each of the columns we have created contains:

| Variable | Description |

| White_British | The percentage of the population of each OA that report themselves as ‘White British‘. |

| Low_Occupancy | Occupancy rating provides a measure of whether a household’s accommodation is overcrowded or underoccupied. The ages of the household members and their relationships to each other are used to derive the number of rooms/bedrooms they require, based on a standard formula. The number of rooms/bedrooms required is subtracted from the number of rooms/bedrooms in the household’s accommodation to obtain the occupancy rating. An occupancy rating of -1 implies that a household has one fewer room/bedroom than required, whereas +1 implies that they have one more room/bedroom than the standard requirement. |

| Unemployed | Percentage of people unemployed (ie those of working age actively seeking employment). |

| Qualification | Percentage of people within an OA with Level 4 qualifications or above: degree (BA, BSc), higher degree (MA, PhD, PGCE), NVQ level 4 to 5, HNC, HND, RSA Higher Diploma, BTEC Higher level, professional qualifications (for example, teaching, nursing, accountancy) |

Joining data in R

We next want to combine the data into a single dataset. Joining two data frames together requires a common field, or column, between them. In this case it is the OA field (OA is short for Output Area). In this field each OA has a unique ID (or OA name), these IDs can be used to identify each OA between each of the datasets.

In R the merge() function joins two datasets together and creates a new object. As we are seeking to join four datasets we need to undertake multiple steps as follows.

#1 Merge Ethnicity and Rooms to create a new object called "merged_data_1"

merged_data_1 <- merge(ethnicity, rooms, by="OA")

#2 Merge the "merged_data_1" object with Employment to create a new merged data object

merged_data_2 <- merge(merged_data_1, employment, by="OA")

#3 Merge the "merged_data_2" object with Qualifications to create a new data object

census_data <- merge(merged_data_2, qualifications, by="OA")

#4 Remove the "merged_data" objects as we won't need them anymore

rm(merged_data_1, merged_data_2)

Our newly formed census_data object contains all four variables, plus the OA column.

Exporting Data

You can now save this file to your workspace folder. Remember R is case sensitive so take note of when object names are capitalised.

# Writes the data to a csv named "practical_data" in your file directory

write.csv(census_data, "worksheet_data/eng_wales_practical_data.csv", row.names=F)These are the kinds of steps you might have to go through when working with a new dataset. The crucial things you need to do are:

- Establish what geographic unit you are using – this may not be and ‘OA’ it could be a country or a county or ward or parish…the list is endless, and it depends on the country you are looking at. But once you know what the unit is you can Google a data source for joining the spatial data (see Week 1).

- Establish what the columns are and give them useful names.

- Make sure that each row corresponds to a single geographic unit. You don’t want multiple rows per unit as this will break the join to the spatial file (see Week 1 below).